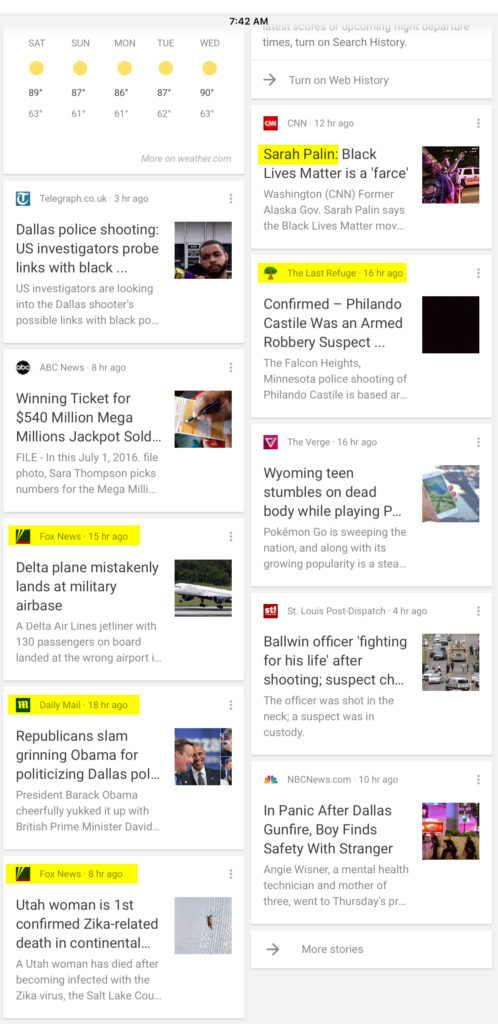

google just served me up the following “news” without knowing my full identity:

this appears to be their default offering for an English speaker in Los Angeles.

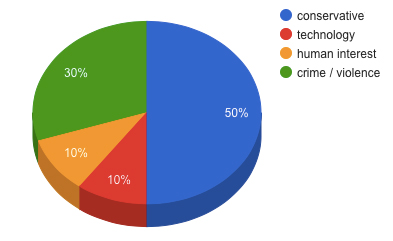

we have two politically neutral stories that lead the user to the conservative Fox News site, a third from the conservative Daily Mail, a fourth from a conservative blog, and a fifth devoted to the opinion of a conservative celebrity. that means 50% of the options link to a conservative worldview.

there are no options for liberal commentary nor any blurbs that point to politically liberal news sites; e.g., the times, the guardian, talkingpointsmemo, msnbc, etc.

i’m certain that Google’s algorithms chose these offerings because they have a higher clickthrough rate (CTR, a measure of engagement). i’m fairly certain that this CTR is based on conservative sites being more effective at evoking the feelings of the demographic that still gets its news from TV and newspaper sites.

computers are like slot machines. people click, swipe, tap until their brains release chemicals that make them feel. anger and fear are strong feelings. they are exciting. by contrast, problem-solving is energy-intensive; it’s literally hard on the brain and not exciting.

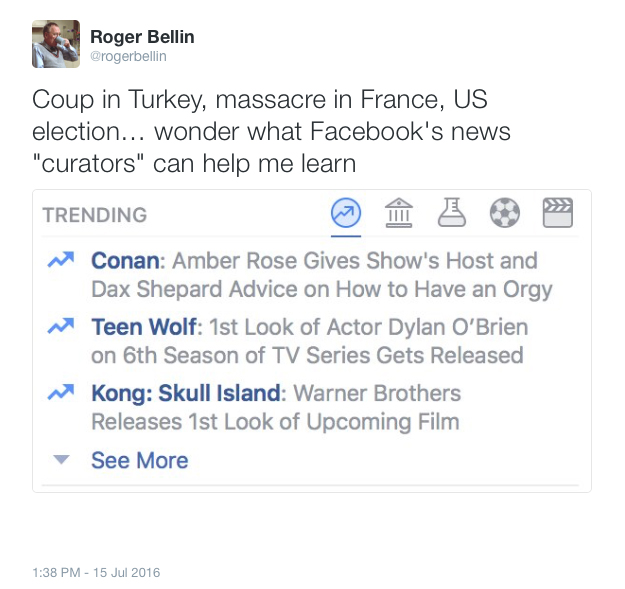

most people get their “news” from facebook and google. while alphabet (google) has recently funded news innovation in europe, it’s because the EU has repeatedly fined them. to the best of my knowledge, facebook has funded no such effort – if anything, they’ve taken a rather different stance towards journalism.

neither newspapers nor television news bureaus are faring well.

some people in silicon valley believe that a coming techno-utopian society will make work obsolete and guaranteed minimum income will provide humans with the time to spend all day clicking, swiping and tapping.

i don’t know the odds for that scenario coming true but, based on the 100% failure rate of the Marxist techno-utopia, i’m going to guess there’s a better than 0% chance our future will not be so pleasant.

instead, it’s possible that a society of clickbait-heads will increasingly make bad decisions that feel good in the short term but feel very bad in the long term. this cause and effect has been studied under the rubric of impulse control. the result is that our current networks abet groupthink, and that’s bad news for all involved.

manias are recurring and often cataclysmic. Brexit is a walk in the park compared to a Donald Trump presidency.

our history suggests that human survival is “hard”. it stands to reason that “hard news” is essential for survival.

hard news is difficult to consume. it requires that the recipient understand a problem that does not have an easy solution – otherwise, it would have been solved.

hard news is also difficult to produce. someone has to identify which facts are relevant, gather them, test them for their veracity and then present them in an easy-to-understand narrative – let alone one that is exciting.

there is no computer that can do this job and the likelihood of such an artificial intelligence in our lifetimes is equivalent to the likelihood of singularity: slim. assuming we survive the century.

facebook in particular has done quite well with its slot machine / “mousetrap.” it might improve its long-term fortunes (i.e., keeping the mice alive) if it learns how to funnel the neurochemicals it so effectively elicits towards problem-solving. in the foreseeable future, such innovation will require that it spend money on people. specifically, on journalists.

postscript july 15, 2016

unrelated / related:

postscript august 27, 2016

Inside Facebook’s (Totally Insane, Unintentionally Gigantic, Hyperpartisan) Political-Media Machine:

On July 31, a Facebook page called Make America Great posted its final story of the day…

Readers who clicked through to the story were led to an external website, called Make America Great Today, where they were presented with a brief write-up blended almost seamlessly into a solid wall of fleshy ads. Khan, the story said — between ads for “(1) Odd Trick to ‘Kill’ Herpes Virus for Good” and “22 Tank Tops That Aren’t Covering Anything” — is an agent of the Muslim Brotherhood and a “promoter of Islamic Shariah law.” His late son, the story suggests, could have been a “Muslim martyr” working as a double agent. A credit link beneath the story led to a similar-looking site called Conservative Post, from which the story’s text was pulled verbatim. Conservative Post had apparently sourced its story from a longer post on a right-wing site called Shoebat.com.

…Then, of course, there’s the content, which, at a few dozen posts a day, Nicoloff is far too busy to produce himself. “I have two people in the Philippines who post for me,” Nicoloff said, “a husband-and-wife combo.” From 9 a.m. Eastern time to midnight, the contractors scour the internet for viral political stories, many explicitly pro-Trump. If something seems to be going viral elsewhere, it is copied to their site and promoted with an urgent headline.

postscript august 29, 2016

Facebook Purges Journalists, Immediately Promotes a Fake Story for 8 Hours

postscript october 20, 2016

What’s Missing From Mark Zuckerberg’s Memo on Peter Thiel

postscript november 11, 2016

Mark Zuckerberg denies that fake news on Facebook influenced the elections

[edit: internal documents contradict this claim as of sep 6, 2018: “Facebook knew about the Russian disinfo campaign DURING the 2016 election, but didn’t take action because of organizational dysfunction.”]

postscript november 13, 2016

Mark Zuckerberg vows more action to tackle fake news on Facebook

postscript january 11, 2017

Facebook says it’s going to try to help journalism ‘thrive’

postscript february 17, 2017

The Mark Zuckerberg Manifesto Is a Blueprint for Destroying Journalism

postscript april 17, 2017

postscript may 25, 2017

APPLE NEWS IS GETTING AN EDITOR IN CHIEF

Morning Media has learned that Apple has given the job — a new position at the Cupertino-based company — to Lauren Kern, one of New York magazine’s most high-ranking editors and a former deputy editor at The New York Times Magazine. It’s unclear what exactly the role will entail, and Kern declined to comment.

postscript oct 6, 2017

Ex-Google strategist: “The dynamics of the attention economy are structurally set up to undermine the human will.”

and:

All of which, Williams says, is not only distorting the way we view politics but, over time, may be changing the way we think, making us less rational and more impulsive. “We’ve habituated ourselves into a perpetual cognitive style of outrage, by internalising the dynamics of the medium,” he says.

It is against this political backdrop that Williams argues the fixation in recent years with the surveillance state fictionalised by George Orwell may have been misplaced. It was another English science fiction writer, Aldous Huxley, who provided the more prescient observation when he warned that Orwellian-style coercion was less of a threat to democracy than the more subtle power of psychological manipulation, and “man’s almost infinite appetite for distractions”.

postscript #this-is-fine-everything-is-fine sep 6, 2018

Six months after FB re-engineered news feed to promote “broadly trusted” news sources, the top 10 stories on the network include an aggregated Daily Caller story, a Snopes-debunked Nike hoax, and 3 Ladbible posts.

postscript sep 10, 2018

somehow, i missed this when it came out, approx. 15 months after this post was first published. former Facebook executive: “The short-term, dopamine-driven feedback loops that we have created are destroying how society works. No civil discourse, no cooperation, misinformation, mistruth.”

postscript nov 18, 2018

“As content gets closer to the line of what is prohibited by our community standards, we see people tend to engage with it more,” – FACEBOOK MOVES TO LIMIT TOXIC CONTENT AS SCANDAL SWIRLS, Wired

Eli Saslow, Washington Post:

“Nothing on this page is real,” read one of the 14 disclaimers on Blair’s site, and yet in the America of 2018 his stories had become real, reinforcing people’s biases, spreading onto Macedonian and Russian fake news sites, amassing an audience of as many as 6 million visitors each month who thought his posts were factual. What Blair had first conceived of as an elaborate joke was beginning to reveal something darker. “No matter how racist, how bigoted, how offensive, how obviously fake we get, people keep coming back,” Blair once wrote, on his own personal Facebook page. “Where is the edge? Is there ever a point where people realize they’re being fed garbage and decide to return to reality?”

postscript dec 11, 2018

After Sundar Pichai’s day of Q&A with members of the US Congress, what Ben Collins said:

“Algorithms are predisposed to surfacing combativeness, feel-good vitriol and provincialism. It’s fixable but complicated, and it’ll make you no money.”

postscript jan 15, 2019

“Why Facebook is giving $300 million for local journalism”

postscript mar 15, 2019

One user’s experience:

Today I opened my first YouTube video on my brand new work computer. I have no stored browsing history. Look at the videos the “algorithm” recommended. This is the second time I’ve seen this happen. I’m disgusted and horrified

postscript mar 16, 2019

A year after the big algorithm change that Zuck said would focus on “time well spent,” Facebook’s dominated by the “angry” reaction, Fox News, and stories designed to scare people or work them up… More than a third of the most-engaged stories on the platform are false but hey engagement is up 50% so ¯\_(ツ)_/¯

postscript mar 25, 2019

I’m sure Apple News+ will be fine, but man is it weird to hear a tech company describing “human-curated news” as some high-concept innovation, and not the way news was selected and distributed for 400 years before the sorted feed era.

postscript may 11, 2020

Big Tech Has Crushed the News Business. That’s About to Change

“The digital platforms need media generally, but not any particular media company, so there is an acute bargaining imbalance in favor of the platforms. This creates a significant market failure which harms journalism and so, society.”

postscript and counterpoint may 15, 2020

Media, Regulators, and Big Tech; Indulgences and Injunctions; Better Approaches